Generative AI is now woven into the daily life of schools. It’s in students’ and teachers’ pockets, on their devices, and in their writing. Increasingly, it’s central to the way we work in schools.

In that context, rightly, one line keeps surfacing in meetings, policy drafts, and conference keynotes: “We must use AI ethically.”

But I’m increasingly uneasy with how easily we say “use AI ethically” – as if the word “ethically” alone does the heavy lifting for us.

It definitely feels right to say it…

If your educational institution has an AI policy or guidelines in place, it probably refers to the importance of ethical practices or consideration or some such.

The Australian Framework for Generative Artificial Intelligence in Schools, for example, refers to such phrases 7 times with little in the way of explicit unpacking of how K-12 educators might systemically ensure ethical approaches are practically explored in classrooms.

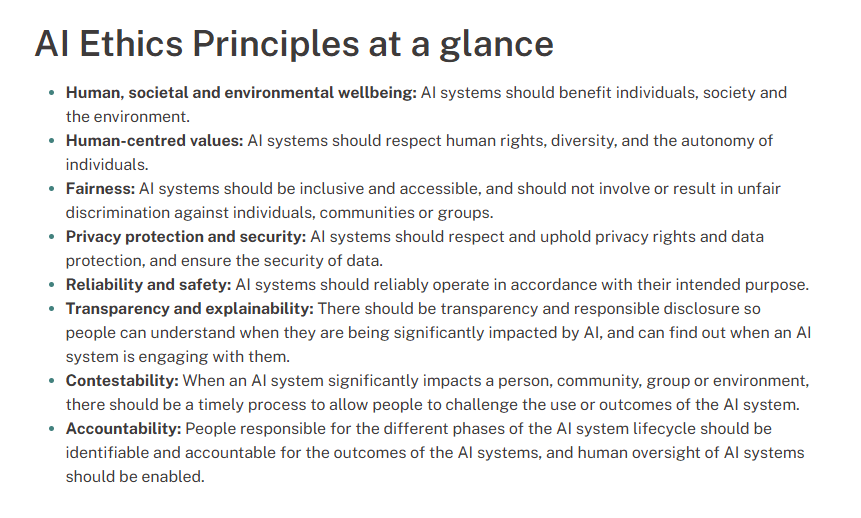

To be fair, however, the Framework does better than many documents. It links to a full discussion of ethical AI use within Australia’s AI Ethics Principles – a document, in turn, developed by the Department of Industry, Science and Resources. (It also references the Alice Springs (Mparntwe) Education Declaration.) Yet, the reality remains that, in the busyness of schools, “ethical” approaches are never unpacked. The phrase “ethical use” often functions like a verbal safety rail: reassuring, non-specific, and hard to argue with. It allows us to say we’re acting on ethics – and gives us the feeling that we’re doing so – without necessarily doing the real work in classroom practice. Such approaches are performative.

We have a responsibility to not be performative when it comes to ethics.

We have plenty of statements about ethical use of AI. In this blog I explore the idea that, perhaps, what our communities need right now are four questions.

Let’s pin the concept of AI ethics down…

The trouble is that “ethical AI” is rarely pinned down. I know few (if any) K-12 educators who have accessed Australia’s AI Ethics Principles. It’s a great document. (I’ve included a section from it below.)

While “we need to teach kids to use AI ethically” is widely stated, it’s become a catch-all response for a bundle of different concerns: integrity, privacy, bias, wellbeing, fairness, transparency. All worthy and legitimate concerns… but wrapped up in this way, they’re hard to bring to the classroom. We have the documents. We need to bring them to life in our classrooms.

So if we’re serious about human-centred AI, we need more than a bumper sticker, a number-plate ‘motto’, or a hollow slogan on a cap. Teachers need shared approaches and a shared vocabulary for classrooms.

They need tools that can shape ordinary classroom decisions on any given teaching day, not just sweeping, unexamined motherhood statements posted on our school websites or in policy documents.

When ‘ethics’ stays vague or buried in bureaucracy, practice drifts. Ethics must be lived.

In the messy middle of school life, vague ethics statements tend to produce predictably poor outcomes. The unexamined ethics statement is little more than compliance theatre: lots of statements, some glossy posters and PDF booklets, some expensive website design, a dose of self-congratulation… perhaps some complacent self-delusion… and very little change in classroom practice.

Four questions to ground ethical AI

It’s hard to take the noble and right-minded thinking of Australia’s AI Ethics Principles into the world of the K-12 classroom. Let’s not, however, build another framework or policy document.

Rather than trying to win the “perfect paperwork” battle, I want to offer something I find more usable: four questions. Perhaps they might become a kernel for bringing ethical AI into the classroom.

These four questions draw on ‘virtue language’ found in some deep traditions. They are not, however, provided here as abstract philosophy, but as a practical way to keep our decisions aligned with human flourishing.

When making decisions around AI in K-12 contexts, perhaps we might ask the following while we make choices.

- Is it wise?

- Is it just?

- Is it ‘balanced’?

- Is it courageous?

These questions aren’t magic. They aren’t new. But they are clarifying. They stop ethics being a fog and turn it into a lens.

Is it wise?

Wisdom.

Wisdom is practical judgement in context. Wisdom, in practice, is about purpose, discernment, and knowing what we’re trying to achieve with AI (and why).

It resists both panic and hype. It asks what matters, and chooses the right means for the right ends.

Wise AI use begins with purpose:

- Why are we using this tool here, now, with these students?

- What learning problem is it genuinely helping us solve?

- What human capability are we trying to grow?

Two lines from the Principles give us a strong “wisdom anchor”:

AI system objectives should be clearly identified and justified…

Throughout their lifecycle, AI systems should benefit individuals, society and the environment.

Wisdom asks us to respond not just to the question “Could we?” but to reflect on “Should we?”. Wisdom asks whether AI is being used for beneficial educational ends (learning, agency, truth-seeking) rather than for convenience, performativity, or a rush to appear “innovative”.

Wisdom keeps the human purpose in the driver’s seat.

Is it just?

To me, justice is about fairness, kindness, and serving the common good. It is about dignity, inclusion, equity, and not quietly amplifying disadvantage.

The Principles are explicit about this:

AI systems should be inclusive and accessible…

A just approach to AI in K-12 asks:

- Who benefits from this practice – and who is burdened by it?

- Does it widen gaps between students with different access, confidence, language, or support?

- Are we protecting learning – or simply chasing grades or protecting the school’s reputation?

- Are we treating students as persons, or problems to be managed?

Justice is also about the culture we build. Justice asks: who benefits, who is burdened, and who is left behind? It also shapes our classroom posture: we set boundaries and expectations without humiliation, suspicion, or “gotcha” tactics that supposedly support academic integrity.

A just approach sets clear boundaries, explains the why behind limits, creates space for repair when students cross lines, and preserves the dignity of both students and teachers. It supports both accessibility and challenge. It is both responsive and demanding.

Is it ‘balanced’?

Moderation is the virtue of balance. It asks when enough is enough. It keeps the human in the loop.

In a system easily seduced by efficiency, moderation quietly insists not everything should be automated. Moderate or ‘balanced’ use of AI in K-12 is about limits.

Moderate AI use asks:

- What must remain irreducibly human here?

- Are we using AI to create more time for relationship and meaning-making – or to avoid it?

- Are we optimising for speed, for grades, for data, for convenience… or for learning?

AI systems should be designed to augment, complement and empower human… skills.

Balance protects development. Balance asks whether AI is supporting human growth or quietly replacing it. It’s the difference between AI as scaffold (for thinking) and AI as substitute (for thinking).

There are moments when we actually want students to carry the cognitive load: grappling with a concept, wrestling with a source, holding a tension, finding their own words. Not because learning must be hard, but because it must walk the line between challenge and accessibility. It must walk a tightrope.

Moderation helps us say:

- “Yes, use AI for brainstorming,” but you must still craft the argument.

- “Yes, use AI for feedback,” but you must decide what to accept and why.

- “Yes, use AI for study,” but you must show what you understand without it, too.

A sense of balance creates a human-centred boundary line.

Is it courageous?

Courage is the virtue that resists fear. Courage is not bravado. It’s not certainty. It’s the willingness to face reality – including the reality of uncertainty in a rapidly changing world – and still act with hope, integrity, and purpose. It’s also the courage to remain our fully human, relational, and authentic selves.

This is the quieter, more personal courage required of teachers right now: the courage to be fully human and present with our students… and the courage to challenge them to be fully present in their learning and growth as people.

Courage chooses authenticity over theatre.

Courage says to students:

- “Here’s what we value.”

- “Here’s what we’re worried about.”

- “Here’s what we’re trying.”

- “Here’s how we’ll keep each other honest – together.”

Courage asks:

- Are we preparing students for their future, or trying to preserve our past?

- Are we willing to take risks to redesign learning rather than merely collect grades and police behaviour?

- Are we building agency, or just compliance?

AI will tempt some students, teachers and schools into performativity: polished outputs, automated feedback, neat compliance narratives, and the illusion of control.

If we want education to remain socially melioristic – oriented toward improving the world, not merely surviving it – then courage matters. We have to engage the challenges and opportunities of this moment, rather than retreating into fear or nostalgia.

Courage is choosing transparency over secrecy, formation over policing, and honest human presence over institutional performance. It’s also the courage to build cultures where students can question, challenge, and seek redress rather than simply comply.

There should be transparency and responsible disclosure…

human oversight of AI systems should be enabled.

Ethics beyond assessment

Assessment often becomes the flashpoint because it forces decisions quickly. But the ethical use of AI in schools is bigger than assessment and academic integrity discussions.

These four questions should shape:

- lesson design and learning routines

- inquiry and research (including truth, evidence, and verification)

- drafting, writing, and creation

- feedback and tutoring

- classroom dialogue and sensemaking

- staff professional learning and shared norms

- communication with families and communities

In other words: ethics isn’t the appendix. It’s the architecture. It’s core to every decision and action taken at all levels of K-12 schooling – and beyond.

When a school can consistently ask “Is it wise? Is it just? Is it balanced? Is it courageous?” it becomes possible to design AI experiences and uses that are coherently human-centred and educationally defensible – without sliding into either prohibition or hype.

A final thought…

We don’t need a single universal AI ethics statement that will satisfy every stakeholder.

But we do need something simpler, and harder: ways of having the conversations that keep us honest.

So when we say “ethical AI”, perhaps we should stop speaking in slogans and start speaking in questions:

Is it wise?

Is it just?

Is it balanced?

Is it courageous?

If we can’t answer those questions in a way that guides real classroom decisions, then the technology will quietly decide our schools’ futures for us.

And that would be the least human-centred outcome of all.
