The recent MIT Media Lab study Your Brain on ChatGPT made headlines for all the wrong reasons. Accusatory articles and alarmist social media posts latched onto a simplistic narrative: that AI tools like ChatGPT are, in short, ‘cognitively corrosive’. But the real story is far more interesting and far more relevant to those of us working in classrooms today.

But let’s start at the beginning. In June, a team of MIT researchers (including lead author Nataliya Kosmyna and seven others) published a paper titled Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task. The paper argued – among other things – that participants who used ChatGPT to write essays from the outset were less engaged cognitively, demonstrated weaker memory recall, and wrote with diminished ownership.

This finding, despite being couched in very careful language and contextualised with thoughtfully considered limitations, led to a huge number of clickbaity headlines claiming that AI is “making people dumber” or that it “can rot your brain”.

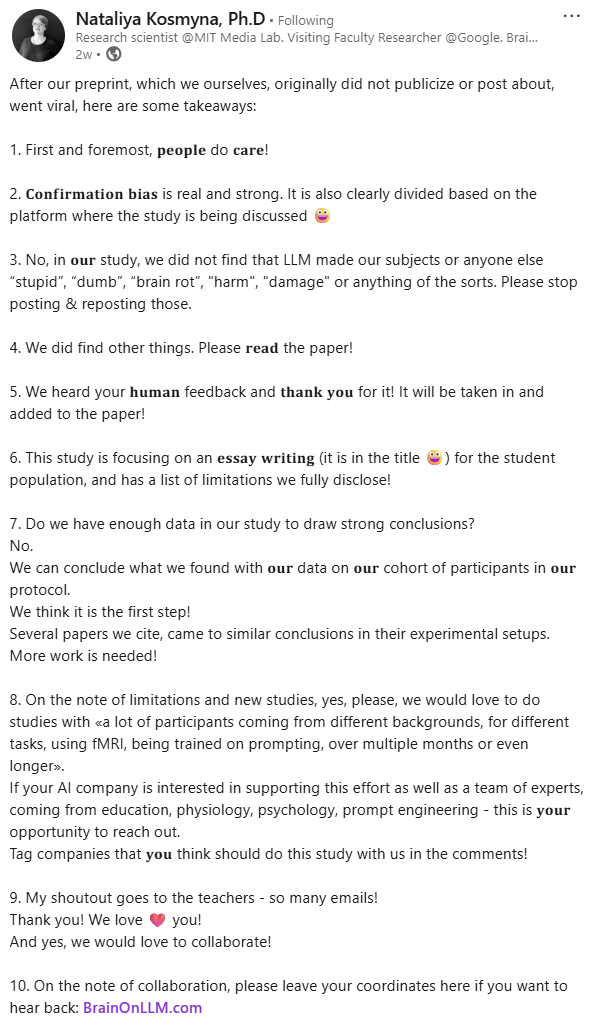

The research paper, or rather, decontextualised snippets of it, very quickly went viral. This virality took on a life of its own – so much so that Kosmyna felt the need to redirect the online chatter towards some of her key takeaways. (You can see these comments here.)

Noteworthy in the comments made by Kosmyna is one in which she notes the “confirmation bias” emerging in discussions around the article. To me, the viral response to the MIT study reveals something of our collective tendency toward panic and polarisation in the face of educational change. Reactions to the study often reflected pre-existing biases – whether techno-optimism or doom. As educators, we must resist such simplistic takes.

Let’s get some findings straight…

Less widely reported than the above finding (that participants who used ChatGPT to write essays from the outset were less engaged cognitively, demonstrated weaker memory recall, and wrote with diminished ownership) was another.

To me, it’s highly significant that participants who first attempted their essays independently and then turned to ChatGPT to refine or extend their work showed significantly higher neural activity, better recall, and more strategic use of AI-generated suggestions. The researchers termed this sequenced approach “Brain-to-LLM”.

Brain-to-LLM participants could leverage tools more strategically, resulting in stronger performance and more cohesive neural signatures. (Kosmyna et al., 2025, p.140)

The MIT paper was never about AI causing “brain rot” or cognitive damage. If it had been, I would have been very surprised. The research would certainly have been headline-worthy! BUT I was largely unsurprised by the core findings of this research. The paper, to me, was ultimately about sequencing cognition.

When students think first, struggle with ideas, and begin to develop their understandings before introducing an AI tool, the cognitive effort involved appears to leave deeper memory traces, stronger neural connectivity, and more meaningful learning.

Taken together, these findings support an educational model that delays AI integration until learners have engaged in sufficient self-driven cognitive effort. Such an approach may promote both immediate tool efficacy and lasting cognitive autonomy. (Kosmyna et al., 2025, p.141)

In other words, to me, a key finding of the paper was that educators shouldn’t focus on AI itself – rather, it’s the timing and purpose of its use that matters.

A bit more about the study…

The study tracked cognitive engagement across several writing sessions using EEG readings. Participants were grouped into three cohorts: an LLM group, a Search Engine group, and a Brain-only group. Each participant used a designated tool (or, in the Brain-only group, no tool) to write an essay. The study had 54 participants who took part in 3 sessions. Notably, 18 participants took part in an optional Session 4. I found Session 4’s findings especially interesting.

We used electroencephalography (EEG) to record participants’ brain activity in order to assess their cognitive engagement and cognitive load, and to gain a deeper understanding of neural activations during the essay writing task. We performed NLP analysis, and we interviewed each participant after each session. We performed scoring with the help from the human teachers and an AI judge (a specially built AI agent). (Kosmyna et al., 2025, p.2)

A key finding of note was that those who had begun their writing journeys without AI and only later used ChatGPT demonstrated both stronger neural connectivity and more accurate recall. Importantly, it’s suggested that this group’s ability to quote from and reflect on their earlier work showed that they had made cognitive investments early – and it paid off.

The researchers linked this difference to the concept of cognitive debt: the extent to which students either bypassed or embraced the cognitive effort required for authentic sense-making and memory formation.

How to integrate LLM use in ways that reduce the accumulation of cognitive debt: an idea we should be talking about

The paper introduces a crucial idea: “the accumulation of cognitive debt, a condition in which repeated reliance on external systems like LLMs replaces the effortful cognitive processes required for independent thinking” (Kosmyna et al., 2025, p.141).

While to me this term was new, this concept isn’t unfamiliar in the lived experience of history teachers.

As history teachers, we often see cognitive debt accumulating when students skip grappling with a process of sense-making and rush towards creating a response that’s heavily based upon the undigested ideas of others… the process of thoughtlessly regurgitating the notes of the teacher, textbook or website.

In essence, the MIT paper made a preliminary finding that when LLMs are used from the outset, learners may outsource the very struggles that lead to deep understanding.

To me this is no surprise. I would argue that their finding indicates that some students are quick to misuse AI just as they ‘always have done’ but with other sources!

To me, a takeaway from the research for secondary school history teachers is that AI use in early assignment stages should be carefully scaffolded.

While students should be encouraged to use AI transparently for some basic tasks (perhaps defining concepts, clarifying instructions, identifying research directions, self-differentiating their learning), tasks requiring critical thinking (those involving analysis, synthesis, evaluation, drafting considered responses) should remain human-led until the learner has done – and demonstrated that they have done – the hard foundational cognitive work.

Teach thinking … and the strategic, effective and ethical use of AI

The MIT research supports “an educational model that delays LLM integration until students have engaged in substantial self-driven thinking” (Kosmyna et al., 2025, p.141).

In response to this statement, teachers need more effective checks for understanding (CfUs) to ensure that substantial self-driven thinking has taken place. I suspect such checks have largely been lacking (remain largely lacking?) in schools.

Such checks might be especially powerful in the early stages of research tasks – especially in subjects like history.

Drawing upon my experiences in flipped learning approaches (including the work of Eric Mazur and Jon Bergmann), I recommend the following principles and approaches, which could have a significant positive impact on student learning from research assignments. While I know that they have a positive impact in my history classes, I suspect they may have application in contexts far beyond them.

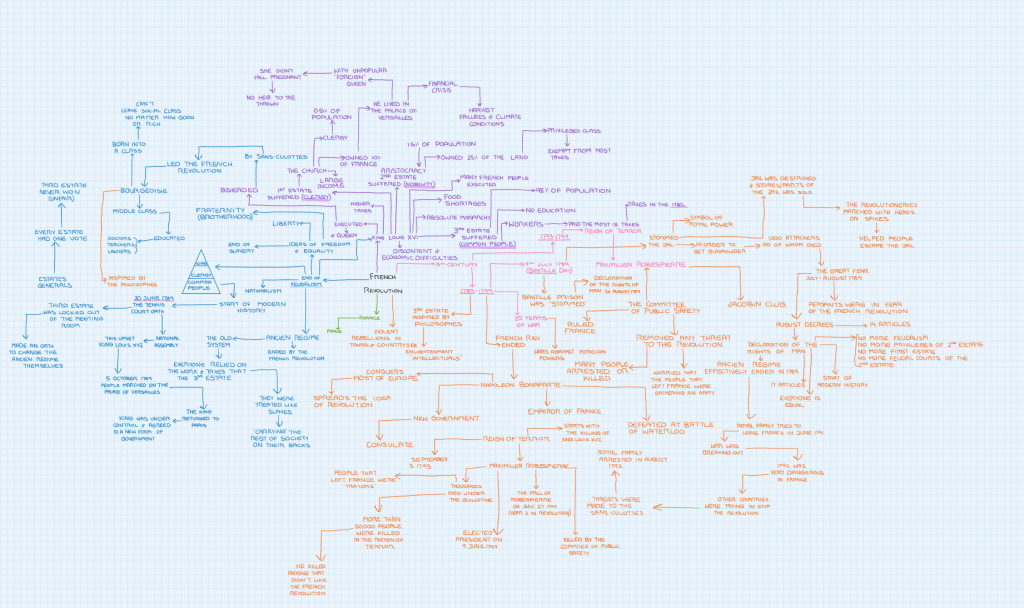

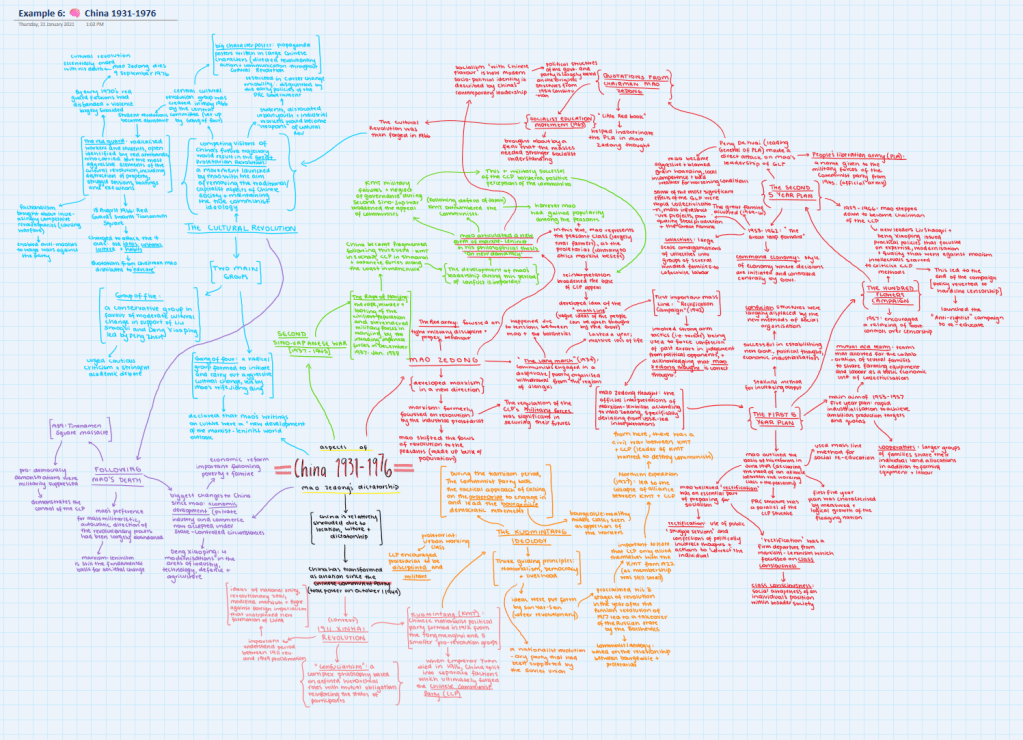

- Do sense-making checks early and often: Require students to engage in unscripted, screen-recorded show-and-tells in which their thinking becomes visible and their understandings of foundational elements of the work are demonstrated. In this process I use Flip ‘assignments’ (formerly Flipgrid) within Microsoft Teams*. In these checks for understanding (CfUs), perhaps ask students to talk through their topic selection, early research, and notetaking. I usually ask students to demonstrate their understandings in screen-recordings of about 5 minutes in length. In these they talk through the mind-maps that they’ve made in their OneNotes. These mind-maps are “mental models” and self-created graphic organisers. In these CfUs, students narrate their thinking aloud – what makes sense, what doesn’t, where they’re struggling. They make observations and ask questions. These CfUs are ‘ill-defined’ problems. They are, by design, open-ended. This allows students the space to demonstrate their abilities as they grapple with uncertainty and as they authentically articulate their grasp of foundational knowledge and skills.

- Require explicit evidence of non-LLM research: Often my students’ first CfUs are expected to be very content-laden. In these, students are primarily expected to demonstrate their knowledge and understanding of concepts underpinning our topic of study. Meaningful interpretation, analysis, and evaluation of sources rests upon a command of substantive content knowledge. In subsequent CfUs, students are required to build upon their initial processes of making sense of content by demonstrating that they can consult and make critical use of non-AI sources. In these CfU checkpoints, students are required to demonstrate that they can locate, identify, interpret, analyse, evaluate, and draw from credible sources such as libraries, museums, archives, and academic databases. In these CfUs, they are also expected to demonstrate that they can make wise, varied, and considered use of websites of a variety of types, ranging from Wikipedia (in lateral reading) to Google Scholar (for finding academic articles, research papers, books, and conference papers). These checks for understanding should include not just what students found, but reflections on how they found it. Students should – literally – show their research process in their narrated screen-recordings. This might include their mind maps, their favourited browser tabs, the notes they are making, the sources they are annotating. CfUs should tell the story of their engagement in the learning process.

Remember, such CfUs should be ‘ill-defined’ problems. They should be, by design, open-ended. This allows students the space to demonstrate their abilities as they grapple with uncertainty and as they authentically articulate their grasp of foundational knowledge and skills.

- Ask for demonstrations of and reflections on AI use: Checks for understanding should also require students to be transparent in their use of AI. It’s important that they demonstrate how they use AI tools in the Brain-to-LLM mode. They should be expected to explain when, how and why they consulted an LLM in conjunction with other tools. Students might explore within a CfU screen-recording what they asked of the AI in their initial prompts, the structure of their prompts, the process by which they engaged in multi-turn iterative chats to clarify, challenge, and elaborate on AI responses, and how their AI use has shaped their thinking. It might include processes of fact-checking, reflection on emerging biases, and examining perspectives. When students use AI, it’s important to encourage them to work in a hybrid mode with AI to supercharge their learning. I like to encourage a process I call: HI + AI (Human Intelligence plus Artificial Intelligence).

(Sorry Talking Heads!) Don’t Stop Making Sense: Toward an AI-infused flipped pedagogy

AI can and should serve different roles at different stages of learning, but we need to recognise that, as Kosmyna et al. caution, “there may be a trade-off between immediate convenience and long-term skill development” for students (p.116). Therefore, we need to develop pedagogies that are AI-infused in ways that are intentional, nuanced, and strategically scaffolded.

A flipped learning model may be part of the answer – but not just in how such models deliver content.

The emphasis of an AI-infused learning model must be firmly on checking for understanding and the process of sense-making, not just on providing content or agentic chatbots. As Eric Mazur has long argued, learning requires learners to make sense of information, not merely to receive or reproduce content.

Sense-making is effortful, and AI tools must not shortcut this process.

Checks for understanding are the flipped classroom’s critical mechanism. They ensure students have engaged with content actively. These checks can be recorded, but they should also be human and face-to-face. They must encourage the types of cognitive engagement that AI alone cannot simulate.

Effective checks for understanding are grounded in the principles of cognitive science and explicit instruction, as advocated by researchers like John Sweller, Nathaniel Swain, Doug Lemov, and Barak Rosenshine. Sweller’s work on cognitive load theory highlights the importance of managing working memory through strategies such as worked examples and completion tasks, which help students internalise new material without cognitive overload. Swain and Rosenshine emphasise the role of guided practice, frequent review, and elaborative questioning – techniques that help make student thinking visible. Lemov’s approach adds the power of cold calling and retrieval practice, encouraging active engagement and accountability while offering teachers real-time insight into student understanding. Paired with metacognitive tools like think-alouds, success-criteria-based self-explanations, and visual frameworks such as graphic organisers, these approaches provide rich, timely information about student learning. Importantly, they share a common goal: making thinking public, effortful, and improvable – so that teaching is adaptive, and learning, truly embedded… and demonstrated.

One powerful and culturally responsive approach to checking for understanding is the use of yarning circles, aligned with the 8 Ways Indigenous pedagogy framework. Yarning circles prioritise relational, dialogic learning – creating a space where students can articulate their thinking, listen deeply to others, and refine understanding through collective sense-making. In the context of Brain-to-LLM approaches, yarning circles offer a deeply human method for students to demonstrate thinking, explore uncertainties, and express how they’ve begun to form connections between content and context. Well-run yarning circles offer opportunities for explorations of sources, perspectives, biases, and even the place of AI within the learning process. This method reinforces that understanding is not just internal but communal, constructed through dialogue and reflection, aligning seamlessly with both flipped learning principles and Indigenous ways of knowing.

Strategic hybrid thinking: HI + AI

To me, the most compelling insight of the MIT study was this: Brain-to-LLM participants performed better because they had engaged in their learning and developed richer internal frameworks before substantively engaging with LLMs. Their re-engagement with AI tools activated not passivity, but reflection, elaboration, and control. Their brains lit up.

As the paper concludes:

Going forward, a balanced approach is advisable, one that might leverage AI for routine assistance but still challenges individuals to perform core cognitive operations themselves. (Kosmyna et al., 2025, p.116)

This echoes Ethan Mollick’s call for thoughtful engagement with AI: we need to avoid the extremes of AI hype or AI panic. Mollick warns that when AI is used to skip thinking, we undermine learning. But when it’s used to interrogate our thinking, to challenge, refine, and extend, it becomes a powerful amplifier of student cognition.

Even lead author Nataliya Kosmyna pushed back on the study’s misrepresentation. In a recent post, she clarified that the research does not claim AI harms the brain, nor does it reject AI outright. Rather, it invites educators to consider when and how students should use AI to enhance – not replace – their thinking.

This is my challenge to teachers:

- Build ongoing checks for understanding.

- Design assignment processes that reward early thinking and visible sense-making.

- Model the hybrid approach: HI + AI.

And, above all,

Trust that our students’ cognitive potential grows when we create space for them to struggle, explain, and own their thinking.

Finally, let’s revisit one of my starting observations: Confirmation bias is everywhere – even in AI discourse. The viral response to the MIT study reveals something of ourselves: our collective tendency towards polarisation in the face of AI’s disruptive impact on education.

As educators, we must resist simplistic takes on AI in education.

The real story behind the headlines about ‘that MIT study’ wasn’t that AI is dangerous.

It was that thinking matters.

* There’s nothing special about this platform. This process could be done in many platforms – all that is required is a screen-recording tool, such as Screenpal, and a platform for submission of video files as is offered in many LMSs.
